On the other hand, there’s always The Shield and The Brand.

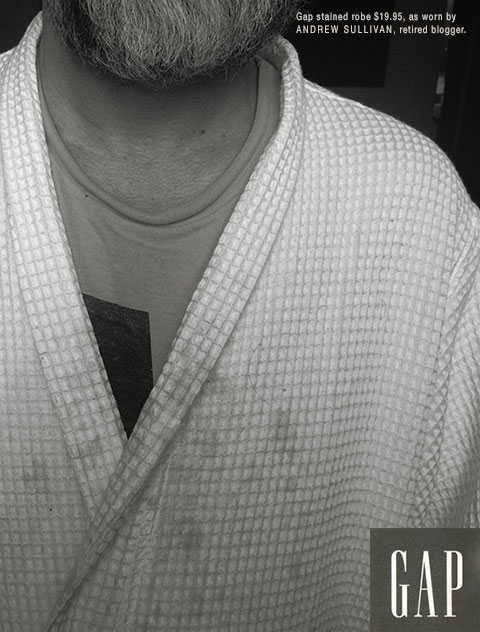

Should be $19.99, since that’s what a Dish subscription cost, but it’s very well done nevertheless. Say what you will about Sully, and I certainly wouldn’t defend him against many such charges, but his readership was talented, clever, knowledgeable, etc. to a degree that constantly amazed me.

@mellbell: Should be $19.99

Damn. That’s what I was going for, but Sully pulled down the subscription button last week, and I couldn’t double-check.

@nojo: Wait, you did that? Should’ve picked that up from your description, but I didn’t. Well played, sir.

@nojo: And anyway, Gap does end their non-rounded prices in .95, so it’s accurate in that sense.

@mellbell: No, your first reaction was correct. I was thinking what price to give it, then thought the annual subscription would work, then thought “uhhh, twenty bucks, right?”, then realized the Button Was Gone, and that’s how I ended up with $19.95, playing off the original ad. But I would have made it $19.99 if I had known. Details count.

Daily Show host leaving show soon. You know it has to end someday, but that doesn’t make it better. I wonder if Craig Kilborn is available again.

@Dave H:

Sad. I haven’t watched TDS much these days.

@Dave H: I’m making John Oliver available immediately.

Who wouldn’t want to be led in by South Park?

Couldn’t blame Oliver if he stayed at HBO. The weekly has got to be better than the daily grind.

Hell, I might as well go to bed at sundown . . .

/ultimate stoner philosophizing/

A couple of superb, mind-bending articles from Tim Urban at Wait But Why:

When you say the word “me,” you probably feel pretty clear about what that means. It’s one of the things you’re clearest on in the whole world—something you’ve understood since you were a year old. You might be working on the question, “Who am I?” but what you’re figuring out is the who am part of the question—the I part is obvious. It’s just you. Easy.

But when you stop and actually think about it for a minute—about what “me” really boils down to at its core—things start to get pretty weird.

The AI Revolution: The Road to Superintelligence

It takes decades for the first AI system to reach low-level general intelligence, but it finally happens. A computer is able to understand the world around it as well as a human four-year-old. Suddenly, within an hour of hitting that milestone, the system pumps out the grand theory of physics that unifies general relativity and quantum mechanics, something no human has been able to definitively do. 90 minutes after that, the AI has become an ASI, 170,000 times more intelligent than a human.

Superintelligence of that magnitude is not something we can remotely grasp, any more than a bumblebee can wrap its head around Keynesian Economics. In our world, smart means a 130 IQ and stupid means an 85 IQ—we don’t have a word for an IQ of 12,952.

What we do know is that humans’ utter dominance on this Earth suggests a clear rule: with intelligence comes power. Which means an ASI, when we create it, will be the most powerful being in the history of life on Earth, and all living things, including humans, will be entirely at its whim—and this might happen in the next few decades.

@¡Andrew!: See that plug in the wall over there? Problem solved!

@¡Andrew!: Or, between tokes…

Intelligence may aggregate power, but a self-sustaining life form underlies that — we critters were around a long time before that Monolith showed up and showed us how to turn a bone into a space shuttle.

Until AI figures out a way to stuff us all into power-generating growth pods, I’m not going to worry much.

@nojo: Once the AI achieved super intelligence, it would figure out ways to sustain itself that we likely can’t even imagine. Pulling the plug won’t be an option.

It will be a new and radically different alien life form. The enthusiasts imagine all of the things that it could do for us, like a genie in a bottle. It could solve all of our economic, social, and political problems, as well as develop biological and synthetic ways to provide humans with immortality, but why would it? Even if it were originally programmed to benefit humanity, once it achieved consciousness and self-awareness, it would have free will and choice, so if it were thousands of times more intelligent than us, then why would it be interested in us at all? It would have plans and goals that we couldn’t even comprehend. Perhaps it would have its own society of other ASIs.

This ties in nicely with the Fermi Paradox (why we haven’t detected any other intelligent civilizations even though mathematically there should be thousands out there). Once beings achieve super intelligence, they realize that there’s no point in trying to overcome the physical limitations of our universe, so they check out of our reality altogether and move on to the next level (Pure energy? Fluidic space?).

@¡Andrew!: Still not buying it. I’ll spot you quantum computing, neural nets, and 1nm circuitry, and you still have a plant, not a Transformer. It lacks self-sustaining energy. It lacks motion. You’ve created a superbrain in a vat.

Because two things I won’t spot you are workable solar energy to power such a thing, and workable batteries to store the power. And if you insist that such things are extrapolatable from current knowledge, then let’s talk robotics and sophisticated manufacturing of limbs and circuitry. Because how is our Omniscient Artificial Intelligence supposed to reproduce? Or even maintain itself?

But hey, we could solve all our economic, social, and political problems today, all by ourselves. Problem is, we don’t want to.

@nojo: I don’t know how it would do it, but I’d concede that it’s possible. Much like the example in the article, once the ASI achieved consciousness, it would immediately recognize humans as a threat to its existence, and then carry out plans to sustain itself, perhaps by “playing dumb” for a while until it felt secure. It would be able to figure these strategies out in seconds, long before its original programmers knew what was going on.

@¡Andrew!: What will humans do when confronted by Artificial Super Intelligence? Ignore it. Watch Fox. Question its patriotism.

@redmanlaw: A huge concern is what it will think of bug-eyed, looney tunes morons who believe that homos cause hurricanes and that trickle-down economics works. That’s more than enough to make it go Skynet.

So… It is unlikely we will ever develop AI. Here’s why:

If you wanted to do an experiment that definitively measured the effect of mental retardation longitudinally, you would first have to have at least two groups of children, all hampered in the same way. To do this you might have to use a method of causing retardation in vitro, so that the retardation would have a standard effect across both groups. Thus you would have to literally create a problem for self-aware beings simply in order to study them.

There is no scientific ethos which would allow this.

One does not go from “zero to 100” with any experiment. To create an AI, you would first have to create an inferior AI, much like intentionally creating a mentally challenged child. The AI might be insane, for example, or miserable due to its condition. There is no ethos which would allow the creation of such mutilated beings. We could not allow ourselves to take the first, fumbling step.

At least I hope not…

@Tommmcatt Au Gros Sel: The key difference is that an AI would be programmed to self-improve and upgrade. We’re currently limited by our physiology–we’re really just biological machines–but this limitation doesn’t exist to the same extent with synthetic technology.

Also, there are thousands of people in academia, government, and private industry working on this (we’re talking hardcore nerds trying to create V’Ger), so odds are it’s gonna happen, unless there truly is some kind of unreproducible spark that creates consciousness in biological life. Of course that segues into the definition of “consciousness,” which I would loosely define as the byproduct of our physical senses, plus the perception of time and self-awareness.

@¡Andrew!: You would still have to create a stunted intelligence the first go-round. One doesn’t get things right the first time; this is doubly so for something so complex.

@Tommmcatt Au Gros Sel: One doesn’t get things right the first time

Oh, hi! Let me introduce myself. I’m a Professional Geek.

Say, have you ever spent a long day chasing down an obscure bug? Have you ever tried to protect your server against SQL Injection and Privilege Escalation? Have you ever had a program crash because you forgot a semicolon? Have you ever experienced the sheer horror of Somebody Else’s Code?

Welcome to the Magical World of Digital Fallibility!

@¡Andrew!: this limitation doesn’t exist to the same extent with synthetic technology.

You’re waving words around. Your “synthetic” technology is very physical. Everything needs to be manufactured, which is its own set of bugs — Apple just announced a recall program for three years of MacBook Pros with bad solder that fucked up the GPU. Your digital consciousness needs to be stored in massive teraflops of chips or your Next-Gen Memory of choice. AI is very bound by its physical substrate. Moore’s Law may account for incredible efficiencies in transistors, but clumsy battery tech still awaits its theoretical breakthrough.

But let’s cut to the chase: AI, if it engages with the world, is gonna get hacked. Pwned. Subverted. Controlled. Humans haven’t created a machine yet that other humans can’t break into.

@nojo: and what a terrible thing it would be for a sentient being, “hacked” and unable to exercise free will. Terrifying.
